梯度下降法

梯度下降係機械學習常用嘅一階疊代最佳化演算法、用嚟搵到可微函數局部最細值(local minimum)。呢個惗法所用到嘅係函數嘅斜率,或者係更高維度(有多個自變數)函數嘅梯度(gradient)或者近似梯度(approximate gradient)、啲表示個函數上升方向(對於斜率)或者最急上升方向(對於梯度)嘅。梯度下降即係喺當前點,喺斜率或者梯度個相反方向上重複執行𨂾步,因為呢個係最急嘅下降方向。相反,沿斜率或者梯度方向𨂾步會最急噉逐步達到呢個函數個局部最大值,噉樣就係梯度上升(gradient ascent)或者話係爬山演算法[1]。

梯度下降通常歸因於Cauchy,佢喺1847年首次提出咗呢個觀點。[2] Hadamard喺1907年獨立噉提出咗一種類似嘅方法。[3] [4] Haskell Curry於1944年首先研究咗嗰種方法對於非線性最佳化問題嘅收斂性[5];跟住,呢個方法嘅相關研究日益深入,方法本身亦都喺跟尾嘅幾十年中得到使用。[6] [7] 呢種方法,又經常叫做最急/最坽下降法(steepest descent)。

描述

[編輯]

梯度下降係基於噉樣嘅觀察,若果個多變量函數喺一粒點嘅附近有定義同可微 ,係噉從去喺嘅負梯度方向上 下降最快。因此,若果對於一個夠細嘅有下式:

即有 。即係話,要從度劘走,因為要逆梯度噉落到個局部最細值。考慮埋呢個觀察結果,從斷估值開始搵局部最細,並考慮序迾係噉即有

由於有一個單調序迾

所以希望序迾收斂得到所需要嘅嗰個局部最細值。注意𨂾長個值允許喺每次疊代得到更改。借由喺函數上嘅某啲假設 (譬如話,凸函數同 Lipschitz式連續 ),與及特定揀出嘅 (譬如話,透過滿足Wolfe條件嘅線搜索,又或者透過Barzilai–Borwein方法[8] [9],如下所示),

函數就有保證收斂得到一個局部最細值。個函數若果係凸嘅話,噉所有啲局部最細值又即係全局最細值,係噉喺呢種情況下,梯度下降可以收斂得到個全局解。

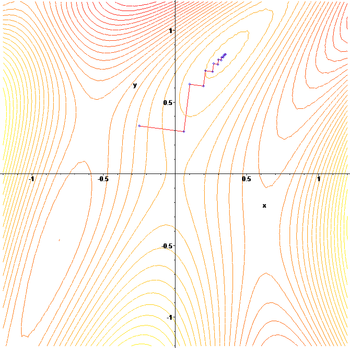

右邊幅附圖說明到呢個過程。嗰度假定係喺平面上定義嘅,而且佢個圖形係成碗狀。藍色曲線係啲等高線,即線上啲值係一樣嘅。紅色箭頭從一粒點出發表示呢粒點度嘅負梯度方向。注意,一粒點度嘅(負)梯度,正交於條經過佢嘅等高線。顯然,梯度下降會逐步指向隻碗嘅㞘底,喺嗰度函數個值係最細嘅。

例碼

[編輯]以下係用 Python 寫嘅一段梯度下降碼:

next_x = 6 # We start the search at x=6

gamma = 0.01 # Step size multiplier

precision = 0.00001 # Desired precision of result

max_iters = 10000 # Maximum number of iterations

# Derivative function

def df(x):

return 4 * x ** 3 - 9 * x ** 2

for _ in range(max_iters):

current_x = next_x

next_x = current_x - gamma * df(current_x) # 睇吓個斜率係點。

step = next_x - current_x

if abs(step) <= precision:

break

print("Minimum at ", next_x)

# The output for the above will be something like

# "Minimum at 2.2499646074278457"

直觀類比

[編輯]

以下個假設情景可以說明得到個梯度下降法後便嘅基本直觀解釋。一個人捱逼到喺山度並嘗試緊落山(即嘗試搵個全局最細值),但霧好大、能見度低之極。因此,落山條路係唔睇得到,係噉佢必須使用啲本地信息嚟搵到個最細值。噉樣即可以使用梯度下降嘅方法,包括睇返當前位置個坡嘅急度,然之後向最坽嘅下降方向(即落坡)前進。若果佢係嘗試要搵到山頂(即最高處)嘅,噉佢要朝最急嘅上升方向(即上坡)前進。用梯度下降嘅話,佢最終會搵到落山嘅路,或者可能韞喺某隻坑度(譬如話,局部最低點或者鞍點),譬如山底湖。但係,仲要假設埋透過簡單嘅觀察並唔立即睇得出山嶺嘅坽度,而係需要一種複雜嘅儀器嚟度量,而呢個人噉時碰啱擁有呢種儀器。用台儀器度山需要相當長嘅時間,因此若果佢想喺日落之前落得到山,佢應盡量減少使用呢台儀器。困難嘅點即係要減少使儀器但又避免行偏道,所以要好好噉揀返個頻率、佢量度個坡嘅坽度嘅。

呢種類比方法入便,人代表個演算法,而落山路徑代表參數設置嘅順序、個演算法要探索到嘅。山嶺嘅急度代表誤差表面個斜率(slope)喺粒點度。用嚟度量急度嘅儀器係微分(透過獲取呢粒點嘅平方誤差函數(squared error function)個導數可以計算得到誤差表面個斜率)。佢揀行進方向就對返呢粒點嘅誤差表面嘅梯度,佢喺進行另一次度量之前經過嘅時間就係𨂾長。

函數例

[編輯]梯度下降喺啲病態函數(pathological functions)方面會面臨到問題,譬如呢度顯示到嘅Rosenbrock函數。

Rosenbrock函數有一個迫窄嘅彎谷,其中包含有嗰個最細值。山谷嘅㞘底好平坦。由於條谷彎曲又平,個最佳化過程係以細𨂾長向個最細值之字噉行。

梯度下降法嘅之字步性質喺下低個例當中都好明顯,個應用到個方法嘅函數係:

揀𨂾長同下降方向

[編輯]由於使用𨂾長太細會減慢收斂,太大會導致分散(divergence),點樣搵到啱嘅係一個重要嘅實際問題。Philip Wolfe仲主張使用「clever choices of the [descent] direction」喺實踐當中。[10]雖然使用偏離最急下降方向嘅方向好似違反咗直覺,但個惗法係透過保持更長嘅距離就可以補償返個細斜率。

數學上說明,使用一個方向同𨂾長並考慮更加一般嘅更新:

- 。

搵啱嘅設置畀同需要一啲思考。首先,希望係個更新方向係指向落坡嘅。數學上,令表示同之間嘅角度,呢個要求。詳細講即需要關於最佳化嘅目標函數嘅更多信息。喺相當弱嘅假設下係連續可微得嘅,可以證明得到: [11]

-

(1)

呢條不等式意指,函數嘅減少程度可以確定得到,即睇返方括號兩䊆嘢大細。方括號度嘅第一項度量嘅係角度,係下降方向戥負梯度之間嘅角度。第二項度量嘅係速度,即梯度沿下降方向變化嘅速度。

原則上,不等式(1)可以喺同最佳化,嚟揀𨂾長同方向。問題係,評估方括號度第二項需要評估埋,而且額外嘅梯度評估通常好耗資源而且唔受歡迎 。一啲方法嚟解決呢個問題嘅有:

- 透過設置放棄clever嘅下降方向嘅優勢 ,然之後使用線搜索搵到啱嘅𨂾長,譬如滿足Wolfe條件嘅嗰啲。

- 假如話係兩次可微分得嘅,使用佢個Hessian矩陣估計,然之後透過最佳化不等式(1)揀返同。

- 假如話係Lipschitz ,使用佢個Lipschitz常數綁定,然之後透過最佳化不等式(1)揀返同。

- 幫 建立一個自定義模型,然之後透過最佳化不等式(1)揀返同。

- 喺函數上有更強嘅假設嘅話,譬如有凸性質,可以實現到先進啲嘅方法,譬如快速梯度下降。

通常,按照上述方法之一,可以保證收斂得到個局部最細值。函數若果係凸嘅,噉所有啲局部最細值亦即係啲全局最細值,係噉喺呢種情況下,梯度下降可以收斂得到全局解。

線性系統嘅解

[編輯]

梯度下降可用嚟求解線性方程組,

可以重新表述為二次最細化問題(quadratic minimization problem)。若果系統矩陣係實對稱而且正定(positive-definite)嘅,通常最細化嘅二次函數為

係噉有

對於一般嘅實數矩陣 ,線性最細二乘法定義

喺傳統嘅線性最細二乘法中使到歐幾里得範數幫實嘅同計,有:

呢個行搜索嘅最細化,嚟搵每次疊代局部最佳𨂾長嘅,對二次函數可以解析噉執行得到,而且針對局部最佳嘅顯式公式都可以噉樣知到。[6][13]

譬如話,對於實對稱同正定矩陣 ,一個簡單嘅演算法可以如下:[6]

避免每疊代兩次乘埋,注意到暗指 ,即畀到傳統演算法:[14]

呢個方法好少用嚟求解線性方程,共軛梯度法(conjugate gradient method)係最受歡迎嘅替代方法之一。梯度下降疊代嘅次數通常正比於系統矩陣嘅頻譜條件數(spectral condition number) (最大特徵值比上最細特徵值 )。之不過共軛梯度法嘅收斂性通常係由個條件數嘅平方根決定,係噉速度要快得多。兩種方法都可以透過預處理(preconditioning)受益;喺呢種情況下,梯度下降可能需要少啲假設喺個預處理器上。[14]

非線性系統嘅解

[編輯]梯度下降亦都可以用嚟求解非線性方程組。以下示例顯示到點樣使用梯度下降法求解三個未知變量x1 ,x2同x3 。呢個示例顯示咗梯度下降嘅一次疊代。

考慮非線性方程組

引入相關嘅函數

其中

係噉可以定義個目標函數:

嘗試將佢最細化。初步斷估,使用

已知

Jacobian矩陣藉由噉樣得出:

計算:

因此有:

必須搵到一個啱嘅,嚟令到滿足以下條式:

呢項工可以透過多種線搜索演算法當中任意一種來完成。其中可以斷估 得到

喺呢個值之下評估個目標函數,得出:

從減少到下一步嘅值,

係針對目標函數嘅一次大幅下降。跟尾啲𨂾步會進一步降低佢個值,直至搵到呢個系統嘅近似解。

評

[編輯]梯度下降可以喺任意多個維度嘅空間度發揮作用,甚至喺無限維度嘅空間度又得。喺後一種情況下,搜索空間通常係一個函數空間,而且計算要最細化嘅函數嘅Fréchet導數嚟確定下降方向。[7]

嗰種梯度下降、嗰種喺任何數量(至少係有限)嘅維度上都起到作用嘅,可以認為係Cauchy-Schwarz不等式嘅一種結果。文章證明,任意維度兩枚矢量共線嗰陣,佢哋內積(點積)嘅幅值(大細)會最大化。喺梯度下降嘅情況下,噉樣就係自變數啲調整(adjustments)嘅嗰個向量成比於啲偏導數個梯度向量嘅情況。

若果畀定函數喺唔同方向上啲曲率(curvature)非常之唔同,噉梯度下降可能需要多次疊代先至可以喺所需嘅精度計出一個局部最細值。對於此類函數,預處理可以改變空間嘅幾何形狀,係噉似同心圓一樣整出啲函數水平集(function level sets),進而解決得到太慢收斂嘅問題。但係,構造同應用預處理可能會喺計算上花銷好大。

梯度下降可以結合埋線搜索,搵到每次疊代中局部最優𨂾長。執行線搜索可能好耗時。相反,使用固定嘅細會產生曳啲嘅收斂性。

啲基於牛頓法同埋基於藉由共軛梯度法反演Hessian矩陣嘅方法可能係愈發好嘅替代方案。[15] [16]通常嚟講,呢類方法收斂嘅疊代次數少啲,但每次疊代嘅成本高啲。一個示例係BFGS方法,呢個方法𨂾親步都計一個矩陣,將矩陣乘埋梯度矢量嚟到好啲嘅方向,再結合仲要複雜嘅線搜索演算法,嚟搵到個「最佳」值。對於啲大型問題、其中電腦內存占主導嘅,應使用有限內存方法(譬如L-BFGS)代替BFGS或者最急下降。

梯度下降可以睇作係應用歐拉法求解常微分方程(ODE)成梯度流(gradient flow)。反過來,呢個方程式可以作為一種最佳控制器(optimal controller)[17]畀到個控制系統,其中係攞回輸形式畀出 。

模改

[編輯]梯度下降可以收斂返一個局部最細值,並喺鞍點週邊變慢。即使對於冇約束到嘅二次最細化(unconstrained quadratic minimization),梯度下降亦都會隨住疊代嘅進行而喺後續啲疊代當中變返之字形步進,噉樣就令到收斂緩慢。有好多種梯度下降嘅模改(modifications)已得到提出,嚟解決啲噉嘅缺陷。

快速梯度法

[編輯]Yurii Nesterov提出咗[18]一個簡單嘅模改,幫凸最佳化問題收斂得愈發快;呢種模改又得到咗進一步嘅一般化(generalized)。對於冇約束到嘅平滑問題(unconstrained smooth problems),呢個方法叫做快速梯度法(FGM)或者加速梯度法(AGM)。具體來講,若果微分函數係凸嘅,係Lipschitz ,而且唔假定到係強凸嘅(strongly convex),噉喺每𨂾步中生成到嘅嗰個目標值、目標值嘅誤差透過梯度下降法係有嘅複雜度 。使用Nesterov加速技術,誤差就會以嘅複雜度降低。[19]已知損失函數下降比率係最啱一階最佳化方法嘅。儘管如此,照舊有機會透過減細常數因子來改進個演算法。最佳化梯度法(OGM) [20]捉常數減少到1/2,係噉成爲咗一種最佳嘅一階方法攞嚟解決啲規模大嘅問題。[21]

對於受約束或者唔平滑嘅問題,Nesterov嘅FGM叫做快速近端梯度法(FPGM),係近端梯度法嘅加速版。

動量或者重球法

[編輯]為咗打破梯度下降嘅之字形,動量(momentum)或者重球(heavy ball)法使用咗一個動量項,類似於喺最細化函數值[6]上滑動嘅重球,或者牛頓動力學當中喺保守力場裏頭透過粘性介質嘅質量運動。[22]有動量嘅梯度下降識記得到個解嘅更新喺每次疊代當中,並將下一個更新確定為梯度同前個更新嘅線性組合而唔係好似完全依靠單純梯度噉。對於冇約束到嘅二次最細化,重球法嘅理論收斂速度界限係漸近噉相同於最佳共軛梯度法嘅。

呢個技術用嚟隨機噉梯度下降並作為一種反向傳播算法嘅擴展、啲攞嚟訓練人工神經網絡嘅。[23]

擴展

[編輯]梯度下降可以得到擴展嚟處理啲數學約束,噉嘅話係透過包含埋一個到啲約束個集合嘅投影嚟實現到。僅衹喺啲投影可以喺電腦上有效噉計算得到嗰陣,呢種方法先至可行。喺適當嘅假設下,呢個方法可以收斂得到。個方法係一種特定情況,屬於針對啲單調包含(inclusions)(包括凸規劃同埋變分不等式)嘅前向–後褪算法。[24]

睇埋

[編輯]考

[編輯]

- ↑ Hill Climbing Algorithms (and gradient descent variants) IRL 互聯網檔案館嘅歸檔,歸檔日期2020年3月27號,..

- ↑ Lemaréchal, C. (2012). "Cauchy and the Gradient Method" (PDF). Doc Math Extra: 251–254. 原著 (PDF)喺2018年12月29號歸檔. 喺2021年6月1號搵到.

- ↑ Hadamard, Jacques (1908). "Mémoire sur le problème d'analyse relatif à Véquilibre des plaques élastiques encastrées,". Mémoires présentés par divers savants éstrangers à l'Académie des Sciences de l'Institut de France. 33.

- ↑ Courant, Richard (January 1943). "Variational methods for the solution of problems of equilibrium and vibrations". Bull. Amer. Math. Soc. 49: 1–23. doi:10.1090/S0002-9904-1943-07818-4 –透過Project Euclid.

- ↑ Curry, Haskell B. (1944). "The Method of Steepest Descent for Non-linear Minimization Problems". Quart. Appl. Math. 2 (3): 258–261. doi:10.1090/qam/10667.

- ↑ 跳到去: 6.0 6.1 6.2 6.3 Polyak, Boris (1987). Introduction to Optimization (英文).

- ↑ 跳到去: 7.0 7.1 Akilov, G. P.; Kantorovich, L. V. (1982). Functional Analysis (第2版). Pergamon Press. ISBN 0-08-023036-9.

- ↑ Barzilai, Jonathan; Borwein, Jonathan M. (1988). "Two-Point Step Size Gradient Methods". IMA Journal of Numerical Analysis. 8 (1): 141–148. doi:10.1093/imanum/8.1.141.

- ↑ Fletcher, R. (2005). "On the Barzilai–Borwein Method". 出自 Qi, L.; Teo, K.; Yang, X. (編). Optimization and Control with Applications. Applied Optimization.第96卷. Boston: Springer. pp. 235–256. ISBN 0-387-24254-6.

- ↑ Wolfe, Philip (1969-04-01). "Convergence Conditions for Ascent Methods". SIAM Review. 11 (2): 226–235. doi:10.1137/1011036. ISSN 0036-1445.

- ↑ Bernstein. "On the distance between two neural networks and the stability of learning".

{{cite arxiv}}:|arxiv=required (help) - ↑ Haykin, Simon S. Adaptive filter theory. Pearson Education India, 2008. - p. 108-142, 217-242

- ↑ Saad, Yousef (2003). Iterative methods for sparse linear systems (第2版). Philadelphia, Pa.: Society for Industrial and Applied Mathematics. pp. 195. ISBN 978-0-89871-534-7.

- ↑ 跳到去: 14.0 14.1 Bouwmeester, Henricus; Dougherty, Andrew; Knyazev, Andrew V. (2015). "Nonsymmetric Preconditioning for Conjugate Gradient and Steepest Descent Methods". Procedia Computer Science. 51: 276–285. doi:10.1016/j.procs.2015.05.241.

- ↑ Press, W. H.; Teukolsky, S. A.; Vetterling, W. T.; Flannery, B. P. (1992). Numerical Recipes in C: The Art of Scientific Computing (第2版). New York: Cambridge University Press. ISBN 0-521-43108-5.

- ↑ Strutz, T. (2016). Data Fitting and Uncertainty: A Practical Introduction to Weighted Least Squares and Beyond (第2版). Springer Vieweg. ISBN 978-3-658-11455-8.

- ↑ Ross, I. M. (2019-07-01). "An optimal control theory for nonlinear optimization". Journal of Computational and Applied Mathematics (英文). 354: 39–51. doi:10.1016/j.cam.2018.12.044. ISSN 0377-0427.

- ↑ Nesterov, Yu. (2004). Introductory Lectures on Convex Optimization : A Basic Course. Springer. ISBN 1-4020-7553-7.

- ↑ Vandenberghe, Lieven (2019). "Fast Gradient Methods" (PDF). Lecture notes for EE236C at UCLA.

- ↑ Kim, D.; Fessler, J. A. (2016). "Optimized First-order Methods for Smooth Convex Minimization". Math. Prog. 151 (1–2): 81–107. arXiv:1406.5468. doi:10.1007/s10107-015-0949-3. PMC 5067109. PMID 27765996.

- ↑ Drori, Yoel (2017). "The Exact Information-based Complexity of Smooth Convex Minimization". Journal of Complexity. 39: 1–16. arXiv:1606.01424. doi:10.1016/j.jco.2016.11.001.

- ↑ Qian, Ning (January 1999). "On the momentum term in gradient descent learning algorithms" (PDF). Neural Networks. 12 (1): 145–151. CiteSeerX 10.1.1.57.5612. doi:10.1016/S0893-6080(98)00116-6. PMID 12662723. 原著 (PDF)喺8 May 2014歸檔. 喺17 October 2014搵到.

- ↑ "Momentum and Learning Rate Adaptation". Willamette University. 喺17 October 2014搵到.

- ↑ Combettes, P. L.; Pesquet, J.-C. (2011). "Proximal splitting methods in signal processing". 出自 Bauschke, H. H.; Burachik, R. S.; Combettes, P. L.; Elser, V.; Luke, D. R.; Wolkowicz, H. (編). Fixed-Point Algorithms for Inverse Problems in Science and Engineering. New York: Springer. pp. 185–212. arXiv:0912.3522. ISBN 978-1-4419-9568-1.

延伸閱讀

[編輯]- Boyd, Stephen; Vandenberghe, Lieven (2004). "Unconstrained Minimization" (PDF). Convex Optimization. New York: Cambridge University Press. pp. 457–520. ISBN 0-521-83378-7.

- Chong, Edwin K. P.; Żak, Stanislaw H. (2013). "Gradient Methods". An Introduction to Optimization (第Fourth版). Hoboken: Wiley. pp. 131–160. ISBN 978-1-118-27901-4.

- Himmelblau, David M. (1972). "Unconstrained Minimization Procedures Using Derivatives". Applied Nonlinear Programming. New York: McGraw-Hill. pp. 63–132. ISBN 0-07-028921-2.

連出去

[編輯]- 喺C ++,Boost,Ublas度使用梯度下降進行線性回歸

- 可汗學院嘅一系列視頻討論咗梯度上升

- 深度神經網絡環境下嘅喺線書教學梯度下降

- "Gradient Descent, How Neural Networks Learn". 3Blue1Brown. October 16, 2017 –透過YouTube.

![{\displaystyle \gamma _{n}={\frac {\left|\left(\mathbf {x} _{n}-\mathbf {x} _{n-1}\right)^{T}\left[\nabla F(\mathbf {x} _{n})-\nabla F(\mathbf {x} _{n-1})\right]\right|}{\left\|\nabla F(\mathbf {x} _{n})-\nabla F(\mathbf {x} _{n-1})\right\|^{2}}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/a4bd0be3d2e50d47f18b4aeae8643e00ff7dd2e9)

![{\displaystyle F(\mathbf {a} _{n+1})\leq F(\mathbf {a} _{n})-\gamma _{n}\|\nabla F(\mathbf {a} _{n})\|_{2}\|\mathbf {p} _{n}\|_{2}\left[\cos \theta _{n}-\max _{t\in [0,1]}{\frac {\|\nabla F(\mathbf {a} _{n}-t\gamma _{n}\mathbf {p} _{n})-\nabla F(\mathbf {a} _{n})\|_{2}}{\|\nabla F(\mathbf {a} _{n})\|_{2}}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1433794e842e36580db8fa219ae5ca650010d6d6)

![{\displaystyle \max _{t\in [0,1]}{\frac {\|\nabla F(\mathbf {a} _{n}-t\gamma _{n}\mathbf {p} _{n})-\nabla F(\mathbf {a} _{n})\|_{2}}{\|\nabla F(\mathbf {a} _{n})\|_{2}}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/982e8508a3147c2ba4e45b7f88fdda4727d13699)

![{\displaystyle F(\mathbf {x} )={\frac {1}{2}}G^{\mathrm {T} }(\mathbf {x} )G(\mathbf {x} )={\frac {1}{2}}\left[\left(3x_{1}-\cos(x_{2}x_{3})-{\frac {3}{2}}\right)^{2}+\left(4x_{1}^{2}-625x_{2}^{2}+2x_{2}-1\right)^{2}+\left(\exp(-x_{1}x_{2})+20x_{3}+{\frac {10\pi -3}{3}}\right)^{2}\right],}](https://wikimedia.org/api/rest_v1/media/math/render/svg/31ebfa155b6d0cdef7771ecacf28d5179dd9b111)